When it comes to measuring the performance of their information security programs, many CISOs stumble – not because of a lack of effort, but because their aim is off the mark. CISOs need information that provides a clear picture of the threat landscape and potential operational and financial impacts. Having an effective cyber metrics program in place is fundamental to managing business risk and undertaking risk mitigation efforts. It can enable a CISO to shift the organization’s cybersecurity program from a controls orientation to one that addresses risk and impact on the organization’s bottom line.

Getting effective measures in place is achievable, but it does require some forethought regarding what to measure and, more importantly, why. CISOs can start by asking themselves a few questions. First, how are other executives measuring and communicating their programs to the executives and board of directors? Second, are my metrics actionable, and how do I create a core set of metrics that incorporates risk, cost, value and business context? Finally, how do I tell my intended story using as few metrics as possible? Simplicity and clarity should be a standing goal for any metrics program, especially when distinguishing between operationally focused measures (such as the number of patches applied or vulnerabilities fixed) and the metrics that should populate executive-level dashboards and board reports (how long, on average, does it take to apply new patches, for example).
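To make the distinction concrete, here is a minimal Python sketch that derives both kinds of figure from the same data: an operational count (patches applied) and an executive-level summary (average days to apply a patch). The records, dates and function names are hypothetical and purely illustrative; a real program would pull this data from its patch management system.

```python
from datetime import date
from statistics import mean

# Hypothetical patch records: (date the patch was released, date it was applied).
patch_records = [
    (date(2024, 1, 9), date(2024, 1, 16)),
    (date(2024, 2, 13), date(2024, 3, 4)),
    (date(2024, 3, 12), date(2024, 3, 15)),
]


def patches_applied(records):
    """Operational measure: how many patches were applied."""
    return len(records)


def mean_days_to_patch(records):
    """Executive-level measure: average days between release and application."""
    return mean((applied - released).days for released, applied in records)


print("Patches applied:", patches_applied(patch_records))
print("Average days to patch:", mean_days_to_patch(patch_records))
```

The count says how much work was done; the average says how long the organization stayed exposed, which is closer to the risk question an executive audience is asking.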

Information security metrics fall short for a variety of reasons, but most of these deficiencies ultimately leave information security stakeholders unable to make optimal decisions based on the information that a performance measure conveys. Some of the most common limitations we regularly help organizations correct include:

- Too much gut: A substantial portion of performance metrics are based on feeling: We’re in a good state right now, so we’ll present that as a metric. This approach – which is more widespread than one might expect – neglects a fundamental need to pull hard data from systems in a way that can be repeated and compared over long periods of time.

- Squishy numbers: The majority of performance metrics we see are overly qualitative, yet even measurement approaches that favor numeric ratings can lack quantitative rigor. A measure may be presented in a quantitative manner – a four-point scale, for example – but a little poking into the process used to generate that figure exposes too much qualitative judgment and too little reliance on accepted industry standards.

- Limited coverage: In some cases where quantitative measures are being reported, the scope of that reporting is not broad enough. Measures that capture patching compliance or percentages of antivirus loaded on devices may reflect a relatively small portion of the company’s entire network. In those cases, what looks like an exceedingly positive measure (98% of devices have up-to-date antivirus software) can mask major risks.

- Limited duration: Some organizations have metrics that are effective but don’t cover a sufficient amount of time. Comparing measures on a month-to-month basis is not enough; year-to-year (or even longer) comparisons are needed to make sound decisions about information security investments and improvements.

- Apples to bananas: This pitfall – switching out measures too frequently – often arises when CISOs implement performance measures for the first time. This practice results in apples-to-oranges-to-bananas comparisons that produce too much noise, especially for board and executive-level audiences. It is important to set a baseline. And, on that count, simplicity and clarity are welcome.
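The limited-coverage pitfall in particular is easy to see with a little arithmetic. The sketch below is illustrative only: the device counts and function names are hypothetical, and it treats any device that does not report as non-compliant, which is a deliberately conservative assumption rather than an established rule.

```python
def compliance_among_reporting(compliant, reporting):
    """Share of reporting devices that are compliant (the headline figure)."""
    return compliant / reporting


def coverage(reporting, total_devices):
    """Share of the whole device estate that the measure actually covers."""
    return reporting / total_devices


def compliance_across_estate(compliant, total_devices):
    """Compliance when devices that do not report are counted as non-compliant."""
    return compliant / total_devices


# Hypothetical figures: 10,000 devices on the network, 6,000 of which report
# antivirus status, and 5,880 of those are up to date.
total_devices, reporting, compliant = 10_000, 6_000, 5_880

print(f"Headline compliance: {compliance_among_reporting(compliant, reporting):.1%}")
print(f"Coverage:            {coverage(reporting, total_devices):.1%}")
print(f"Estate-wide:         {compliance_across_estate(compliant, total_devices):.1%}")
```

Reporting the coverage figure alongside the headline number lets the audience judge how much of the network the measure really describes.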

Setting baseline measures does not require intricately designed metrics, only foundational ones that answer important questions. Performance metrics should help CISOs, their operational colleagues and senior leaders understand what needs to be done differently tomorrow to strengthen cybersecurity and reduce risk across the business. Developing measures that help crystallize that understanding starts by addressing some sweeping and vital questions, such as: How much cybersecurity is enough for an organization? What story do we want the metrics to tell? Do the metrics tell us whether we’re investing in the right places? Are we improving our program?

The SMART acronym can help CISOs perform a relatively quick assessment of their current approach by asking whether the metrics they use are:

- Specific

- Measurable

- Attainable

- Relevant

- Timely

These types of questions spark discussions and realizations that should ultimately produce more effective and audience-relevant metrics. Your audience, including senior leadership and the board, wants a vivid snapshot that depicts the business risks that exist and what actions are being taken to strengthen the program (and therefore reduce the business risks). This snapshot tends to require summary-level metrics that provide insights about the overall information security program. Operational metrics also should provide clarity on risks, but those risks should be couched at a more detailed level that ties to specific actions operational groups can take to mitigate the risks.

Leading information security programs also present performance information differently to different audiences. Board committees receive slide decks whose length and structure reflect their information-gleaning preferences. Operational units may get reports that make greater use of data visualization.

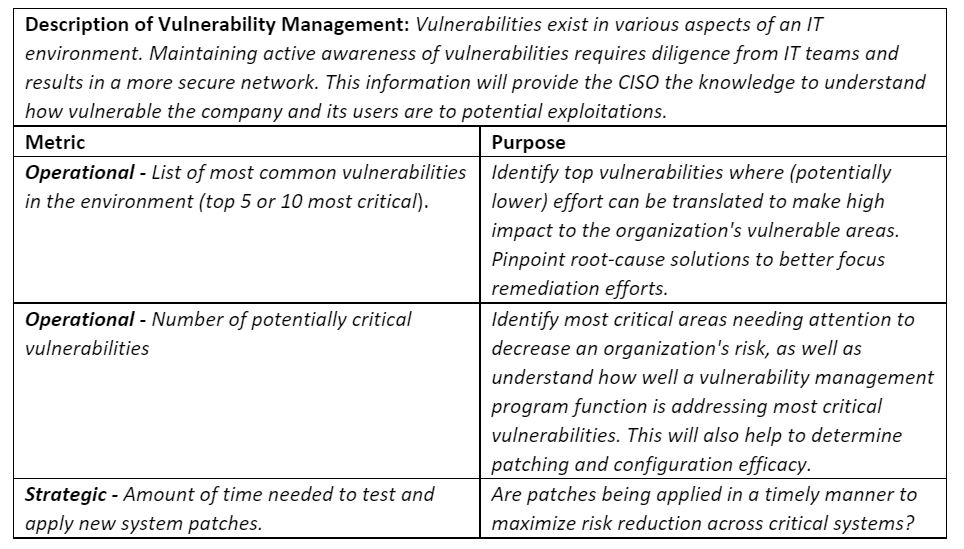

Given that vulnerability management is a common board-level concern, here’s an example of how that topic can be reported with specific metrics:

Each cyber program domain should have a core set of operational metrics and a smaller set of strategic metrics. Common domain areas include identity and access management, GRC (governance, risk and compliance), security operations (monitoring, detection and response), cyber resiliency (business continuity and disaster recovery), application security and engineering.

While a couple of industries with a legacy of cybersecurity efficacy – financial services comes to mind first – generally lead the way in getting effective performance metrics in place, efficacy varies from program to program. Organizations that have suffered cybersecurity breaches tend to ramp up their metrics in response. CISOs should interact with and share performance metrics and prospective reports with their chief risk officers. Cyber risks should always be categorized as business risks, since they affect the business.

The vast majority of CISOs are putting the necessary effort into measuring the performance of their programs. By enhancing those efforts through more effective communication methods, defining actionable metrics in terms of business risk, value and business context, and emphasizing simplicity and clarity, security leaders can agree on signals that convey to executives at all levels of the organization what actions and investments will improve information security performance and efficacy.
