DevOps is dead – or so the story goes. This statement is echoed more and more these days, much to the dismay of the DevOps faithful. Technologists, however, need to be careful to read between the lines: DevOps is most certainly NOT dead.

DevOps is more alive than ever, although the connotation of the term DevOps continues to evolve. The key point remains: as software development teams move past legacy methodologies and focus purely on modern delivery models, DevOps has simply become the common way things are done.

Today’s elite enterprises have been busy working on deeper solutions to the challenges of developing high-quality software at scale, whilst accelerating velocity and maintaining compliance. Optimizing quality, velocity and compliance concurrently sounds like a monumental achievement but, again, it is simply what is expected today. Enter platform engineering and the concept of the internal developer platform (IDP).

A higher payoff – introducing the platform engineering team

Software engineering and feature development have evolved quite a bit since the advent of Agile and DevOps methodologies. Early practices such as eXtreme Programming (XP) have been joined by a host of toolsets that have sprung up to support Agile, DevOps and other leading practices in software development. Platform engineering as a discipline intends to centralize and standardize the use of these toolsets, providing a common platform to serve developer communities’ infrastructure, automation, security and observability needs.

Elite performers in the DevOps space have been reporting success stories for some time now, citing benefits such as significantly higher agility, drastically reduced time to implement, fewer change failures and less repetitive work. The results are faster time-to-market, increased security and resiliency, happier development teams and higher employee retention.

Newer generations of software delivery and development teams have been utilizing DevOps and platform engineering concepts for years now without even realizing it. Elite firms have been scaling these leading practices to gain even more economies of scale, by developing and deploying accelerators such as internal developer platforms (IDPs) and employing a platform engineering team as a part of their DevOps practice, or infrastructure team.

Once an IT organization reaches a certain critical mass (for example, 10+ developers), it should begin to consider centralizing certain functions into a specialized team that serves all development teams. This team does not develop application-layer code; rather, it serves the developers by eliminating non-application-layer development work. Enter the platform engineering team. This team achieves economies of scale for the organization by standardizing, centralizing and automating routine activities that all developers would otherwise be required to do. Once these activities are turned into automated service offerings, they can be presented through a user portal, with requests passed to abstraction layers for provisioning and deployment. Together, the portal and those layers make up the IDP.

IDPs enable these benefits by providing self-service capabilities, which matters because platform engineering teams typically support large groups of developers. The larger the group of development teams, the higher the payoff. Self-service for commonly used services eliminates the need for large teams to handle repetitive tasks on behalf of the development teams, and it extends to operations processes common to all users, including non-developers. For example, access provisioning can be automated so that developers submit identity and access management requests through the same automation that is available to the entire enterprise’s user community.
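As a rough illustration of the self-service idea, the sketch below models a catalog that maps an offering name to the automation behind it, using the access request as the example. It is a minimal sketch, not a real IDP: `ServiceCatalog`, `Request`, `grant_access` and the `iam-access` offering name are all hypothetical, and the handler only returns a message where a real one would call the enterprise's identity system.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Request:
    requester: str
    offering: str
    params: Dict[str, str]


class ServiceCatalog:
    """Maps offering names to the automation that fulfils them."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[Request], str]] = {}

    def register(self, name: str, handler: Callable[[Request], str]) -> None:
        self._handlers[name] = handler

    def offerings(self) -> List[str]:
        # What a portal would list in its marketplace-like view.
        return sorted(self._handlers)

    def submit(self, request: Request) -> str:
        handler = self._handlers.get(request.offering)
        if handler is None:
            raise KeyError(f"unknown offering: {request.offering}")
        return handler(request)


def grant_access(request: Request) -> str:
    # Hypothetical stand-in for a call to an identity and access
    # management system; here it only formats a confirmation.
    role = request.params["role"]
    resource = request.params["resource"]
    return f"granted {role} on {resource} to {request.requester}"


catalog = ServiceCatalog()
catalog.register("iam-access", grant_access)

result = catalog.submit(
    Request("dev-alice", "iam-access", {"role": "reader", "resource": "orders-db"})
)
print(result)
```

Because every offering goes through the same `submit` path, the same catalog could serve developers and non-developers alike, which is the point the paragraph above makes.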

Complex services such as IP address management (IPAM), DNS registrations, virtual machine provisioning, storage device creation and allocation, Kubernetes services and many others can be automated in the same way to allow self-service provisioning. For legacy users, this dramatic shift to automated provisioning can sometimes be hard to fathom. The newer generations of developers will soon expect automated provisioning and will have a hard time understanding why things used to take so long to provision. Again, DevOps is simply the way things are done now.
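To make one of these services concrete, here is a minimal sketch of automated IPAM using Python's standard `ipaddress` module. The `SubnetPool` class, the `10.20.0.0/22` range and the first-free allocation policy are assumptions chosen for illustration; a production IPAM service would persist allocations and handle concurrent requests.

```python
import ipaddress


class SubnetPool:
    """Hands out fixed-size subnets from a parent network, first free wins."""

    def __init__(self, parent_cidr: str, new_prefix: int) -> None:
        self._parent = ipaddress.ip_network(parent_cidr)
        self._new_prefix = new_prefix
        self._allocated = set()

    def allocate(self):
        # Walk the candidate subnets in address order and take the
        # first one that has not been handed out yet.
        for candidate in self._parent.subnets(new_prefix=self._new_prefix):
            if candidate not in self._allocated:
                self._allocated.add(candidate)
                return candidate
        raise RuntimeError("pool exhausted")

    def release(self, subnet) -> None:
        self._allocated.discard(subnet)


pool = SubnetPool("10.20.0.0/22", new_prefix=24)
first = pool.allocate()
second = pool.allocate()
pool.release(first)
third = pool.allocate()  # the released subnet is reused
print(first, second, third)
```

A self-service request for a new network would call `allocate()` and get an answer immediately, rather than waiting on a manually maintained spreadsheet or ticket queue.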

Building and utilizing the IDP

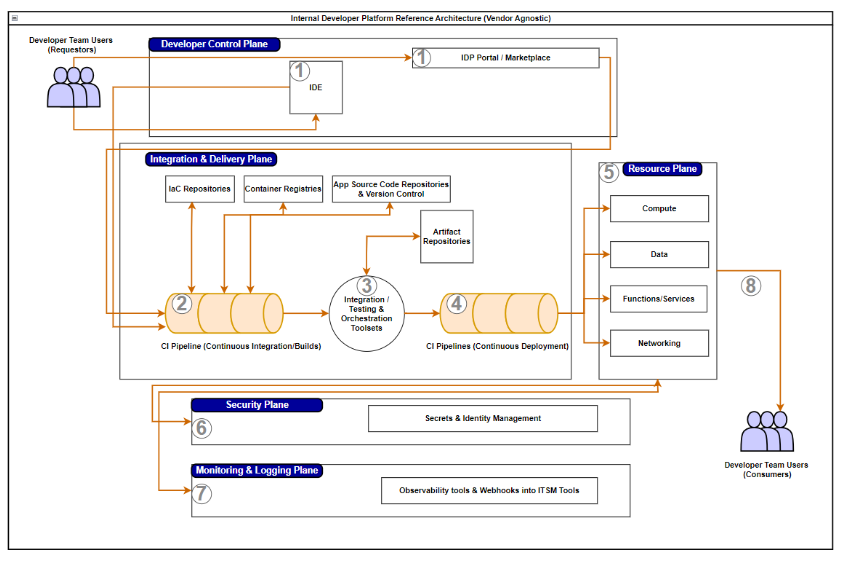

The reference architecture illustration above is one example of a basic internal developer platform. It is intended to provide a basic understanding of which services can be automated and extended to the developer user communities via self-service, as well as how requests flow through the various components of the IDP. Once users have access to the portal, all that is required for use is an understanding of the underlying technologies and, of course, a charge code!

Seven steps to success

Here’s a chronological walk through each step shown in the above diagram:

1. Developers and dev team users connect to the IDP’s self-service capabilities either through IDE tool integration or through a front-end GUI portal. The portal provides a marketplace-like view of all products and services available for consumption by the dev team (and any other relevant teams, for that matter).

2. Users provide the necessary input, populating environment variables and other metadata required for the provisioning and deployment automation to run. Build pipelines handle these requests, each pipeline having been developed specifically for the products and services it manages.

   NOTE: It is in this step that the pipelines access IaC repositories, container registries and other source code repositories, each of which maintains its own version control and ‘n, n-1, and n-2’ instances of the objects’ source code. A rolling set of versions is maintained for rollback and backward-compatibility purposes.

3. Orchestration tools provide the abstraction layer that manages provisioning requests and all integration and automated testing functions as the new objects are provisioned and code builds occur. It is also in this step that build artifacts, once they have passed all testing and migrations to higher environments, are added to their respective artifact repositories for version control and for re-deployment by downstream processes as necessary.

4. Deployment pipelines are initiated when all necessary approvals are provided, resulting in the potential for continuous deployments to production environments.

5. Recently deployed infrastructure code and the underlying cloud resources are configured with their required compute, data, services and network layer components.

6. Security tooling is configured, new workflows and infrastructure are added to existing security scanning tools, and other security features are configured.

7. In parallel with step six, new workflows and infrastructure are added to existing observability toolsets, and monitoring and alerting services become available to manage the new objects.
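The rolling ‘n, n-1, and n-2’ retention mentioned in the note above can be sketched with a bounded queue. This is an illustrative assumption about how such a policy might be modeled, not a description of any particular repository or registry; `VersionHistory` and the version tags are hypothetical.

```python
from collections import deque
from typing import List


class VersionHistory:
    """Keeps the current version (n) plus the two before it (n-1, n-2)."""

    def __init__(self, keep: int = 3) -> None:
        # A deque with maxlen silently drops the oldest entry
        # once the limit is reached.
        self._versions = deque(maxlen=keep)

    def publish(self, version: str) -> None:
        self._versions.append(version)

    def current(self) -> str:
        return self._versions[-1]

    def rollback_targets(self) -> List[str]:
        # Newest first, excluding the current version.
        return list(reversed(self._versions))[1:]


history = VersionHistory()
for tag in ["1.0.0", "1.1.0", "1.2.0", "1.3.0"]:
    history.publish(tag)

print(history.current())           # 1.3.0
print(history.rollback_targets())  # ['1.2.0', '1.1.0']
```

After the fourth publish, `1.0.0` has aged out, leaving two versions available as rollback targets.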

Once all these steps are completed, user credentials and other details are provided to the requestor, notifying them that their new infrastructure or services are available for consumption.
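The overall flow can be sketched as a small runner: five steps in sequence, the security and observability steps in parallel, then a notification to the requestor. Every name here (`step`, `fulfil`, the step labels, the `postgres` request) is a hypothetical placeholder, and each step only reports that it ran; real steps would call build, orchestration and deployment tooling.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

Step = Callable[[dict], str]


def step(name: str) -> Step:
    """Build a placeholder step that only reports that it ran."""
    def run(request: dict) -> str:
        return f"{name}:{request['service']}"
    return run


SEQUENTIAL: List[Step] = [
    step("portal-request"),    # 1. request arrives via portal or IDE
    step("build-pipeline"),    # 2. inputs collected, build pipeline runs
    step("orchestrate-test"),  # 3. orchestration and automated testing
    step("deploy"),            # 4. deployment once approvals are given
    step("configure-cloud"),   # 5. compute, data, services, network
]
PARALLEL: List[Step] = [
    step("security"),          # 6. security tooling
    step("observability"),     # 7. monitoring and alerting
]


def fulfil(request: dict) -> List[str]:
    completed = [run(request) for run in SEQUENTIAL]
    with ThreadPoolExecutor(max_workers=len(PARALLEL)) as pool:
        # map() yields results in submission order even though the
        # two steps run concurrently.
        completed.extend(pool.map(lambda run: run(request), PARALLEL))
    # Final hand-off: tell the requestor the service is ready.
    completed.append(f"notify:{request['requester']}")
    return completed


log = fulfil({"service": "postgres", "requester": "dev-alice"})
print(log)
```

An exception in any step propagates out of `fulfil`, so the requestor is only notified when every earlier step has succeeded.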

Platform engineering practices and IDPs can provide modern enterprises with competitive advantages enabling rapid innovation and fostering growth. The velocity that can be achieved while maintaining a secure environment at scale provides a compelling value proposition.

Technology leaders need to be keenly aware of the benefits platform engineering principles can provide and should seriously consider adopting this latest evolution of Agile and DevOps. The journey to realize these benefits can be challenging, and expectations for implementation timelines and costs should be realistic, but the ROI will make senior leadership ask, “Why haven’t we done this before?”
