As part of an organization’s SAP S/4HANA journey, or really any enterprise system implementation project, the lack of attention paid to data is an all-too-common pitfall. While it is understandable that project teams focus the majority of their attention on the functional build and testing requirements that are typically core to a program’s success, a lack of attention to data can be, and usually will be, the death of a project, regardless of how well the system is constructed. If the implementation has a strong testing program, data issues can often be discovered during testing and before go-live. However, this is very late in the program, and correcting the issues before switching to the new system will often require a delay. We strongly recommend that companies lay the foundation for a strong data conversion strategy well in advance of the start of the implementation. If data preparation and data conversion are addressed and well planned prior to the start of the project, then one of the key risk areas for most projects will be largely taken care of before resource bandwidth is stretched thin. This approach benefits any project team and will be a driving factor in the overall success of the program.

Assess Data Quality

The first step in tackling data preparation for an S/4 implementation is taking stock of the data quality in the current system. Analyzing existing master data pain points is always a good idea, such as issues with the chart of accounts, customer master, and vendor master. If migrating from ECC to S/4, it is also important to develop a strategy for those specific master data elements that structurally differ between the two systems. An example of this is customer and vendor master data, which are represented as distinct data types in ECC, but have been consolidated into a single business partner concept in S/4. Another important assessment to perform in the existing environment involves taking inventory of all reports used by the organization and determining which will be needed in the future-state S/4 environment. As part of this effort, the organization should determine not only which legacy custom reports can be replaced by native S/4 reporting or analytics capabilities, but also what additional reports or new analytics strategies need to be supported. The organization should also gain an understanding of challenges with data and reporting that happen outside of core systems (e.g., in spreadsheets). These considerations are important for structuring data appropriately and streamlining reporting in S/4. This assessment will also help to inform changes that may need to be made to data elements and structures, and what additional tools may be needed to meet future reporting and analytics needs.

Prep the Data

After getting a feel for the current system’s data landscape, it’s time to start thinking about how to prep this data for the eventual migration to S/4. Often, there are unrealistic expectations of high data quality in the existing system. However, upon deeper inspection, it’s not uncommon to find many or all of the following issues:

- lack of clear data definitions and/or data standards

- poor data quality (e.g., duplicate, inactive, or incomplete data)

- historical business process changes that affected data in the legacy system but were never addressed by subsequent system changes
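The data quality issues listed above can be surfaced with a simple profiling pass over an extract of the legacy data. The following is a minimal sketch in Python, not a replacement for a dedicated profiling tool. The record layout, the field names (`id`, `name`, `tax_id`, `last_activity`), the three-year inactivity threshold, and the choice of a shared tax ID as the duplicate signal are all assumptions made for illustration.

```python
from collections import Counter
from datetime import date

# Hypothetical legacy vendor extract; field names are illustrative only.
records = [
    {"id": "V001", "name": "Acme Corp", "tax_id": "12-345", "last_activity": date(2023, 5, 1)},
    {"id": "V002", "name": "ACME Corp.", "tax_id": "12-345", "last_activity": date(2024, 1, 15)},
    {"id": "V003", "name": "Globex", "tax_id": "", "last_activity": date(2017, 3, 9)},
]

REQUIRED_FIELDS = ("name", "tax_id")  # assumed mandatory fields
INACTIVE_AFTER_DAYS = 3 * 365         # assumed inactivity threshold


def profile(records, as_of):
    """Return the ids of records that look incomplete, inactive, or duplicated."""
    # Count how often each non-empty tax id occurs to spot likely duplicates.
    tax_counts = Counter(r["tax_id"] for r in records if r["tax_id"])
    return {
        "incomplete": [
            r["id"] for r in records if any(not r[f] for f in REQUIRED_FIELDS)
        ],
        "inactive": [
            r["id"] for r in records
            if (as_of - r["last_activity"]).days > INACTIVE_AFTER_DAYS
        ],
        "duplicate": [
            r["id"] for r in records
            if r["tax_id"] and tax_counts[r["tax_id"]] > 1
        ],
    }


report = profile(records, as_of=date(2024, 6, 1))
for issue, ids in report.items():
    print(f"{issue}: {ids}")
```

A report like this gives the project team a concrete list of records to review, which tends to be a quicker way to reset expectations about legacy data quality than a general discussion.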

Additionally, it is important to understand customizations that have been made to data in the existing system, as well as optimization points. The project team should ask: what can be archived or eliminated? It is also critical to make sure that the S/4 migration plans for the future state of the business and does not focus solely on what the business looks like today. With all of these factors in mind, the next step in preparing data for migration is to set up a strategy and plan to cleanse the data prior to conversion. There are many data cleansing methods available, and it’s critical to understand which tools the organization may already own that can help. For example, there may be SAP licenses sitting on the shelf for products like SAP Data Services or SAP Information Steward, both of which are very effective tools for data cleansing.

Data Cleansing

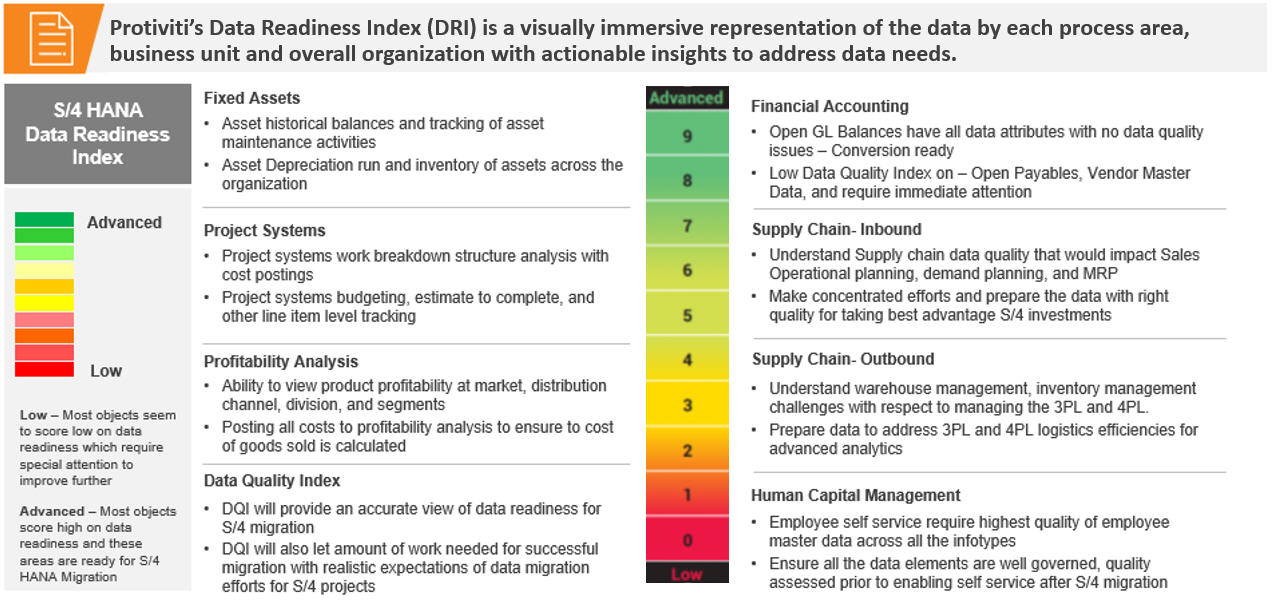

Data cleansing is one of several critical activities that will need to take place pre-migration. Pre-migration activities focus on planning for the conversion by looking at the extraction process. Once an effective extraction process has been determined, the next step is data profiling and cleansing. The strategy here should not lose sight of the relevant business processes and in fact, those processes should drive the strategy around data profiling and cleansing for key master data elements. For example, the project team should look at how customer and material master data impact the order to cash process, and then design business rules that will be used in assessing data quality based on the output of that analysis. In this way, data quality checks are customized to be specific to the business. Determine which attributes of master data drive system behavior, then tailor the data quality rules to enforce business rules on these crucial SAP master data fields. A remediation plan and data quality scorecards, such as a data readiness index (DRI), should be instituted to manage the process.
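To make the idea of business-driven data quality rules and a scorecard concrete, here is a minimal Python sketch. It is illustrative only: the customer fields, the three rules, and the definition of the data readiness index used here (the percentage of records that pass every rule) are assumptions, and a real program would derive its rules from its own order-to-cash analysis and agree on its own DRI formula.

```python
# Hypothetical customer master extract; field names are illustrative only.
customers = [
    {"id": "C001", "payment_terms": "NET30", "credit_limit": 50000, "country": "US"},
    {"id": "C002", "payment_terms": "", "credit_limit": 10000, "country": "DE"},
    {"id": "C003", "payment_terms": "NET45", "credit_limit": -1, "country": "US"},
    {"id": "C004", "payment_terms": "NET30", "credit_limit": 0, "country": ""},
]

# Assumed business rules on fields that drive order-to-cash behavior.
RULES = {
    "payment_terms_present": lambda r: bool(r["payment_terms"]),
    "credit_limit_non_negative": lambda r: r["credit_limit"] >= 0,
    "country_present": lambda r: bool(r["country"]),
}


def score(records, rules):
    """Return (dri, failures): percent of fully clean records and failing ids per rule."""
    failures = {
        name: [r["id"] for r in records if not check(r)]
        for name, check in rules.items()
    }
    # A record counts against readiness if it fails at least one rule.
    failed_ids = {rid for ids in failures.values() for rid in ids}
    dri = 100.0 * (len(records) - len(failed_ids)) / len(records) if records else 0.0
    return dri, failures


dri, failures = score(customers, RULES)
print(f"Data readiness index: {dri:.1f}%")
for rule, ids in failures.items():
    print(f"{rule}: {ids}")
```

Tracking the per-rule failure lists alongside the overall index lets the remediation plan assign each category of defect to an owner and shows progress from one cleansing cycle to the next.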

Once the data quality assessment and cleansing steps are complete, the final step in the pre-migration process is to map the data from the old data structures to the to-be structures in S/4. The migration itself will consist of developing the conversion process (business rule analysis), testing the conversion process (data transformation and remediation), and executing the conversion (data load).
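As one illustration of mapping old structures to to-be structures, the sketch below folds separate legacy customer and vendor records into a single business partner record per organization. This is a simplified Python sketch of the concept, not a description of how SAP's own conversion tooling works. The field names, the use of a tax ID as the matching key, and the shape of the output record are assumptions.

```python
def to_business_partners(customers, vendors):
    """Merge legacy customers and vendors into one record per tax id.

    Simplification: assumes at most one customer and one vendor per tax id,
    so duplicates should be cleansed before this mapping step runs.
    """
    partners = {}
    for role, rows in (("customer", customers), ("vendor", vendors)):
        for row in rows:
            bp = partners.setdefault(
                row["tax_id"],
                {"name": row["name"], "roles": [], "legacy_ids": {}},
            )
            bp["roles"].append(role)
            # Keep the legacy key so loaded data can be reconciled later.
            bp["legacy_ids"][role] = row["id"]
    return partners


# Hypothetical legacy extracts; field names are illustrative only.
legacy_customers = [{"id": "C100", "name": "Acme Corp", "tax_id": "12-345"}]
legacy_vendors = [
    {"id": "V200", "name": "Acme Corp", "tax_id": "12-345"},
    {"id": "V201", "name": "Globex", "tax_id": "98-765"},
]

partners = to_business_partners(legacy_customers, legacy_vendors)
for tax_id, bp in partners.items():
    print(tax_id, bp["name"], bp["roles"], bp["legacy_ids"])
```

Keeping the legacy identifiers on each mapped record is a deliberate choice here: it supports reconciliation between source and target during conversion testing.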

Post-Implementation Data Governance

After go-live, it is of paramount importance to ensure that data governance processes are in place to maintain quality data moving forward. Going live with clean data is a great start, but if that data starts to degrade over months or years, the organization will end up in the same spot that it wanted to avoid at the time of go-live. Efficient processes, clear data quality rules, and defined stewardship of the master data will put the organization in the best position to avoid data issues in the future. Ideally, these governance elements are defined during or even before the implementation. They can be solidified after the system is live, but the organization should not wait long to do so.

Data First

In summary, ensure that data is at the top of the priority list going into an S/4 implementation, right alongside the functional and business process considerations that are likely already there by default. Bad data will always impact the quality and timeliness of any S/4 implementation project. Whether the organization is coming from ECC or a non-SAP legacy environment, it is cheaper and simpler to address data readiness before the project begins than to address it during the project. Organizations that are already past this point can still course correct and properly address data concerns during the implementation or after go-live, but doing so will almost certainly be more expensive and time-consuming. Addressing data early will set everyone up for a successful S/4 project.
