Modern Analytic Operating Models, DevOps and Data Literacy Initiatives for SAP Analytics

This is the first of several posts that focus on improving data literacy, at a business line and enterprise scale, using technology in the SAP ecosystem.

A Chief Data Officer (CDO) or other senior analytics leader sits at the intersection of data capture, processing, storage, governance and utilization. Of these responsibilities, utilization stands out; the others are mechanisms and controls that serve it. The term ‘utilization’ undersells the goal, which is why ‘data monetization’ is occasionally used instead: it extends the idea beyond use to value, or return on investment (ROI). Delivering that value is the core purpose of an enterprise’s analytical assets. Examples of analytical assets include SAP BW/4HANA, Data Warehouse Cloud, HANA, Data Services, BusinessObjects, Analytics Cloud, Leonardo, Integrated Business Planning and a number of other technologies.

As one can glean from the diverse SAP product examples above, data utilization can be furthered in many ways. Some of these applications give end-users greater access to information through self-service data visualization. Some provide advanced processing capabilities for highly specialized use-cases such as Artificial Intelligence or Machine Learning. Others enable distributed collaboration for demand/supply forecasting. Still others focus on streamlining the acquisition, storage and transformation of data for the applications described above. One of the core challenges today is the range of specialized skills and knowledge needed to cover all these utilization scenarios… and we have not even begun to scratch the surface of the content of the data!

Coincidentally, I have found that the fundamental goals established in bestselling books such as Stephen R. Covey’s The 7 Habits of Highly Effective People or David Allen’s Getting Things Done are similar to our overarching goals with data utilization: we need to achieve better business outcomes through streamlined work methods. This message is often lost in a web of technology details, never reaching the end-users and stakeholders.

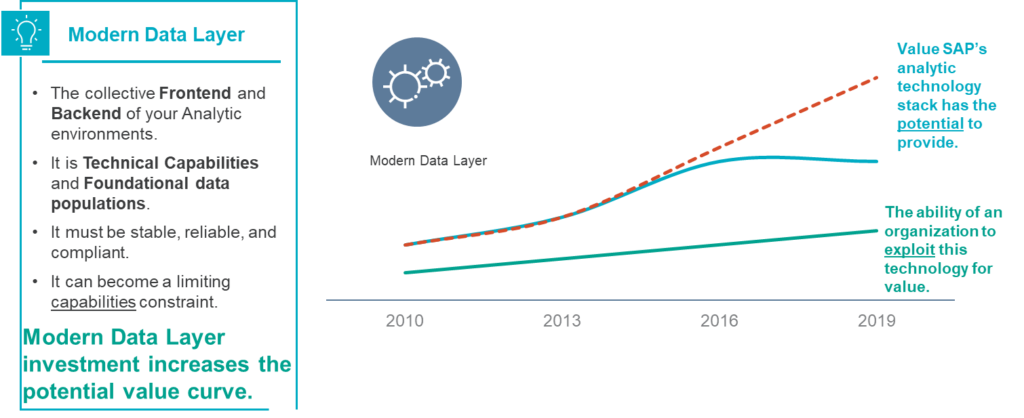

Envision a chart with two diverging lines, one representing maximum potential utilization/monetization value and the other representing an organization’s current ability to exploit analytic technologies for value. The difference, or delta, between these lines represents the organization’s opportunity to improve data utilization/monetization by improving data literacy.
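To make the delta concrete, here is a minimal Python sketch of the two lines. Every number in it is an invented index value used purely for illustration; none of it comes from a survey or from the chart above, and the names `CurvePoint` and `literacy_gap` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CurvePoint:
    period: int             # hypothetical time period (1, 2, 3, ...)
    potential_value: float  # illustrative index of maximum potential value
    current_ability: float  # illustrative index of the organization's ability


def literacy_gap(points):
    """Return the delta (potential minus ability) for each period."""
    return {p.period: p.potential_value - p.current_ability for p in points}


# Made-up values: potential grows faster than ability, so the lines diverge.
points = [
    CurvePoint(period=1, potential_value=100.0, current_ability=80.0),
    CurvePoint(period=2, potential_value=140.0, current_ability=95.0),
    CurvePoint(period=3, potential_value=200.0, current_ability=110.0),
]

gaps = literacy_gap(points)
for period, gap in gaps.items():
    print(f"period {period}: opportunity gap = {gap}")
```

In this toy example the gap widens each period, which is the situation a data literacy program is meant to reverse.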

Reducing this divide has rapidly become an organizational imperative over the last five years. In a 2019 survey by NewVantage Partners, 48% of organizations self-reported that they compete and/or differentiate based upon data and analytics. There is also a general sense of missing out or being left behind. In 75% of organizations, the C-Suite fears data-driven or insight-driven competitors, yet just under 31% of these executives identify their own organization as being data-driven.

The Right Ingredients and the Right Skills

The tendency is to jump into HOW to solve this problem, but it would be a mistake not to discuss WHO the users are and what they specifically need. To illustrate the challenge: if we were to provide the recipe, could an end-user create a gourmet meal? Some home cooks could, but they are likely to have an interest in, passion for or experience with cooking. Other users may lack the necessary knife skills or be unable to acquire the ingredients. They may not have the skills or equipment necessary to prepare the meal. If they are unaccustomed to the culinary style, they may not be able to discern the overall quality or intention of the recipe. Many self-service analytic users face similar challenges.

The self-service end-user (home cook) may lack the necessary technical (knife) skills or security access (ingredients). They may not have acquired cooking skills (data visualization, storytelling/communication, analysis techniques). They may not have deep enough contextual business process and functional knowledge (culinary style, vision). Yet we expect these end-users to just generate gourmet meals, as if it should just happen, without any supportive programs, methods and tools that build foundational knowledge for the enterprise.

If the expected outcome were refined to require dozens of diverse gourmet meals in an evening, such as in a fine dining restaurant, the division of individual activities, quality of ingredients, critical path of the processing steps, access to specialized appliances and techniques, and most importantly, vision and communication become critical to success. Professional chefs’ knife skills are simply assumed.

Consider a modern analytic approach to data monetization, literacy and utilization as resting on three primary pillars and foundational operating models: initiatives, projects and the modern data layer.

Initiatives: Focus on business user (home cook) enablement for self-service. They teach and nurture capabilities and skills. This is the rising tide that lifts all boats. They bend the ability curve upward.

Projects: Focus on enabling engineers and developers (the professional chefs) to drive advanced analytic techniques and approaches into the business processes using innovative managerial approaches and technologies. This will also bend the ability curve upward. These teams will be the first to be constrained by the modern data layer and outdated DevOps approaches.

Modern Data Layer: This is the collective frontend and backend of the analytic environment. It is the technology stack that sets the potential value curve for the other functions and serves as the gatekeeper for audit, cost control, standardization and compliance. This pillar is unique in that it bends the potential value curve rather than the ability curve.

In an upcoming blog, I will dive deeper into the initiatives pillar. If you are interested in learning more about this topic, please feel free to contact me by email to discuss further. I also host a recurring SAP Analytics data literacy focus and user group. If you would like to collaborate with peers and thought leaders in a structured way, please reach out for an invitation to this group.

To learn more about our SAP and Intelligent Technologies capabilities, contact us or visit Protiviti’s SAP consulting services.